In fact, log monitoring solutions using Elasticsearch, Fluentd, and Kibana are also known as the EFK Stack. That's why we combine Elasticsearch as a long-term storage for logs and metrics, and Kibana as a visualization tool. Therefore, Fluentd also needs a long-term storage system. Fluentd exists between various log sources and the storage layer that stores the collected logs, and is similar to Logstash in the Elastic Stack.

Fluentd is an open source log collection tool that has been known for a long time, and it is also very popular. In order to eliminate those limitations, users should either go with clustered storage in Prometheus itself, or use the Prometheus interfaces that allow integrating with remote storage systems. However, when it comes to storage, Prometheus has some limitations in its scalability and durability since its local storage is limited to a single node. Prometheus uses a pull model that scrapes metrics from endpoints and ingests them into Prometheus server. Using Prometheus and FluentdĪs you know, Prometheus is a very popular open source project as a metric toolkit, which holds a dominant position especially in the recent metric monitoring for Kubernetes environments. In this blog, we will explore how to monitor Kubernetes using Prometheus and Fluentd in conjunction with the Elastic Stack.

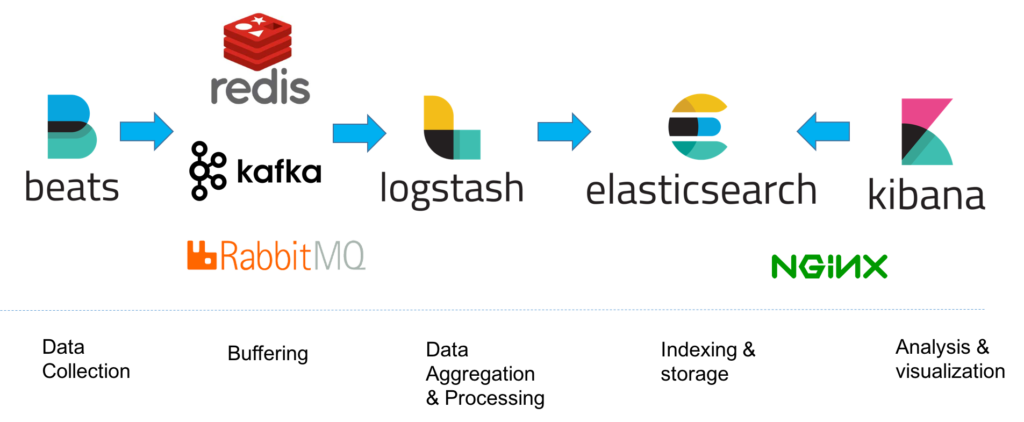

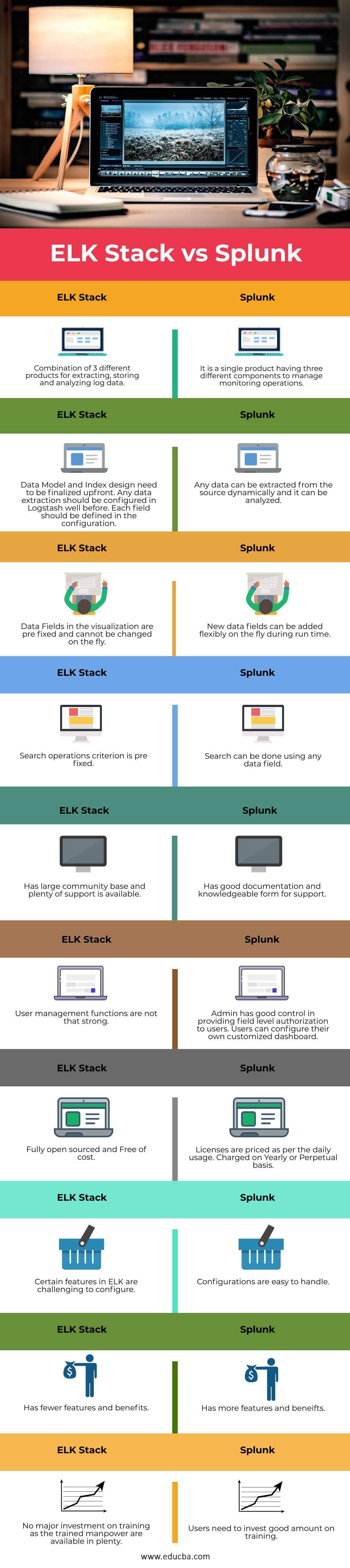

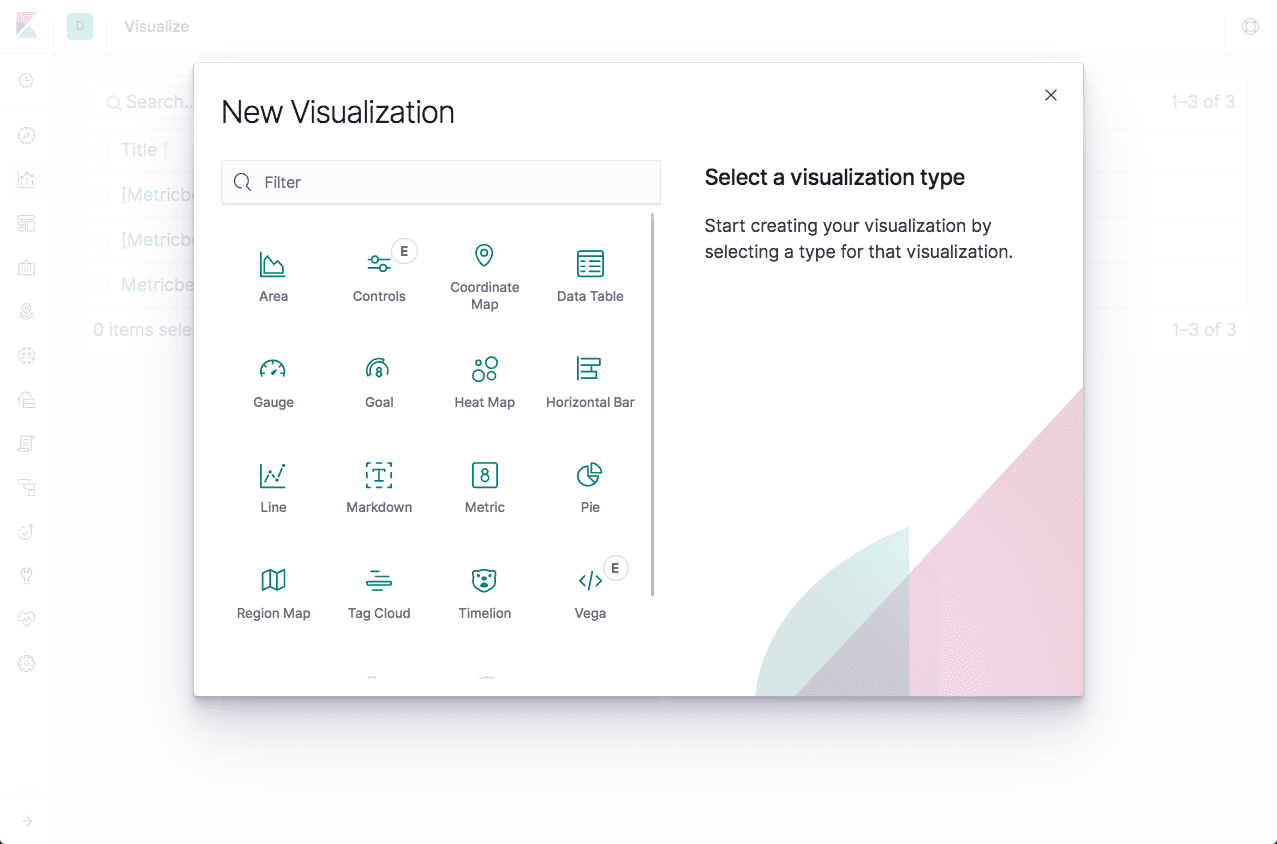

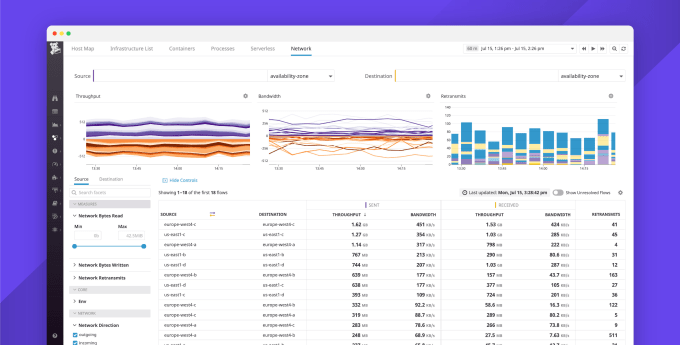

While Elastic Observability can be used to establish observability for Kubernetes environments, many users also want to use the open source-based monitoring tools they already have. Fortunately, Elastic has been widely used for infrastructure and application monitoring solutions for many years - both as the ELK Stack (Elasticsearch, Logstash, and Kibana) and more recently as Elastic Observability. But on the other hand, this has made monitoring applications and their underpinning infrastructure more complex. The shift from monolithic applications to microservices brought by Kubernetes has enabled faster deployment, where dynamic environments become commonplace. We will leverage the prometheus go client to expose metrics and create them.Kubernetes is an open source container orchestration system for automating computer application deployment, scaling, and management, and seems to have established itself as the de facto standard in this area these days.

How to Prevent Breaking API Changes with an API Gateway

Monitor the routes passing through the gateway. If a previously available route suddenly starts returning 404 errors, the API may have undergone a change or an endpoint may have been deprecated. Enable the API health check feature to continuously monitor the overall health of upstream nodes. If one of the nodes starts to fail, or responds faster or slower than usual, it might indicate a change in.

Monitoring and Logging: Middleware often includes monitoring and logging components such as the ELK Stack, Prometheus, and Grafana to track the health, performance, and behavior of microservices. This aids in troubleshooting and performance optimization. Run `kubectl top` to get the metrics of a Kubernetes component, and if needed, deploy Prometheus to gather metrics from nodes and applications.

Monitor API Health Checks with Prometheus

APISIX has a health check mechanism that proactively checks the health status of the upstream nodes in your system. Upstream nodes are the multiple instances of a backend API service that APISIX manages. APISIX also integrates with Prometheus through its plugin, which exposes the upstream nodes' health check metrics on the Prometheus metrics endpoint, typically at the URL path `/apisix/prometheus/metrics`.

Prometheus, an open source systems monitoring and alerting toolkit, has client libraries that allow you to instrument your services in a variety of languages. Here's an example of how you might use the Go client library to expose metrics on an HTTP endpoint.

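The two gateway checks described above can be automated against the APISIX metrics endpoint. The Go sketch below reads the Prometheus text output and lists upstream nodes whose health status is 0. It also compares per-route 404 counts between two scrapes. The sketch assumes the metric names `apisix_upstream_status` (1 for healthy, 0 for unhealthy, with `ip` and `port` labels) and `apisix_http_status` (with `code` and `route` labels), as described for the APISIX `prometheus` plugin. It also assumes the default export address `127.0.0.1:9091`; verify both against your APISIX version. The helper functions are hypothetical, and the label parser is deliberately simplified.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"os"
	"sort"
	"strconv"
	"strings"
)

// labelValue returns the value of label key in a series such as
// apisix_upstream_status{name="/apisix/upstreams/1",ip="10.0.0.1",port="80"}.
// Simplification (assumption): label values contain no commas or spaces.
func labelValue(series, key string) string {
	start, end := strings.Index(series, "{"), strings.LastIndex(series, "}")
	if start < 0 || end <= start {
		return ""
	}
	for _, pair := range strings.Split(series[start+1:end], ",") {
		if k, v, ok := strings.Cut(pair, "="); ok && k == key {
			return strings.Trim(v, `"`)
		}
	}
	return ""
}

// eachSample calls fn for every labeled sample line of the given metric.
func eachSample(text, metric string, fn func(series string, v float64)) error {
	sc := bufio.NewScanner(strings.NewReader(text))
	for sc.Scan() {
		fields := strings.Fields(sc.Text())
		if len(fields) < 2 || !strings.HasPrefix(fields[0], metric+"{") {
			continue // skip comments, blank lines, and other metrics
		}
		v, err := strconv.ParseFloat(fields[1], 64)
		if err != nil {
			return fmt.Errorf("parsing %q: %w", sc.Text(), err)
		}
		fn(fields[0], v)
	}
	return sc.Err()
}

// unhealthyNodes returns "ip:port" for each upstream node whose
// apisix_upstream_status gauge is 0 (assumed: 1 = healthy, 0 = unhealthy).
func unhealthyNodes(text string) ([]string, error) {
	var bad []string
	err := eachSample(text, "apisix_upstream_status", func(s string, v float64) {
		if v == 0 {
			bad = append(bad, labelValue(s, "ip")+":"+labelValue(s, "port"))
		}
	})
	return bad, err
}

// notFoundCounts sums apisix_http_status samples with code="404" per route label.
func notFoundCounts(text string) (map[string]float64, error) {
	counts := map[string]float64{}
	err := eachSample(text, "apisix_http_status", func(s string, v float64) {
		if labelValue(s, "code") == "404" {
			counts[labelValue(s, "route")] += v
		}
	})
	return counts, err
}

// newlyFailingRoutes lists, sorted, the routes whose 404 count grew between
// two scrapes. Counter resets (e.g. after an APISIX restart) are not handled.
func newlyFailingRoutes(before, after map[string]float64) []string {
	var routes []string
	for route, n := range after {
		if n > before[route] {
			routes = append(routes, route)
		}
	}
	sort.Strings(routes)
	return routes
}

func main() {
	// Reads one scrape from stdin (see the usage note below the block).
	raw, err := io.ReadAll(os.Stdin)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	bad, err := unhealthyNodes(string(raw))
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	counts, err := notFoundCounts(string(raw))
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Println("unhealthy upstream nodes:", bad)
	fmt.Println("404 responses per route:", counts)
}
```

For a one-off check, pipe a scrape into the program, for example `curl -s http://127.0.0.1:9091/apisix/prometheus/metrics | go run .`, which prints the current state. To catch routes that have started returning 404s, pass the counts from two scrapes taken a few minutes apart to `newlyFailingRoutes`.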